History of Computers

Mechanical beginnings

Some mechanical control and computing devices preceded the development of the modern computer. In the early 1800s, a French inventor named Joseph-Marie Jacquard produced a loom that could weave complex patterns into cloth. The loom was controlled automatically by reading instructions punched as holes in cards.

An American named Herman Hollerith invented punched-card machines that were used to tabulate statistics for the 1890 US census. Hollerith eventually sold out to a company named CTR (Computing-Tabulating-Recording Company), which later became IBM (International Business Machines).

Punched cards (and marked cards) are still in limited use for gathering input data for computers. You may recall the debacle in Florida during the 2000 presidential election, where improperly punched voting cards caused problems with the vote counting.

More interesting from a theoretical standpoint was the work in the 1800s by Englishman Charles Babbage. Babbage designed a mechanical computing device named the Analytical Engine in 1834. Although he was never able to build the device (he couldn't get funding to develop the precisely machined gears, wheels, and lever systems of the machine), his ideas included many concepts that were later incorporated in modern computers.

ABC: The First Digital Electronic Computer

The first digital electronic computer was built by John Vincent Atanasoff and his assistant Clifford Berry at Iowa State University between 1937 and 1942. The Atanasoff-Berry Computer (ABC) used punched cards for input and output, vacuum tube electronics to process data in binary format, and rotating drums of capacitors to store data.

The ABC, however, only performed one task: it was built to solve large systems of simultaneous equations (up to 29 equations with 29 unknowns), an onerous computing task commonly found in science and engineering. So, the ABC was not a general-purpose computer.
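To give a sense of the kind of problem the ABC was built for, here is a minimal sketch in Python of solving a small system of simultaneous equations by Gauss-Jordan elimination. It is only an illustration of the task: the function name `solve_linear_system` is made up for this example, and the code is not a reconstruction of how the ABC itself carried out the work.

```python
from fractions import Fraction


def solve_linear_system(coefficients, constants):
    """Solve a square system of linear equations by Gauss-Jordan elimination.

    coefficients is a list of rows (one per equation) and constants holds the
    right-hand sides. Exact fractions are used to avoid rounding error.
    """
    n = len(coefficients)
    # Build the augmented matrix [A | b].
    rows = [[Fraction(v) for v in row] + [Fraction(b)]
            for row, b in zip(coefficients, constants)]
    for col in range(n):
        # Find a row at or below the diagonal with a nonzero entry in this column.
        pivot = next((r for r in range(col, n) if rows[r][col] != 0), None)
        if pivot is None:
            raise ValueError("system has no unique solution")
        rows[col], rows[pivot] = rows[pivot], rows[col]
        # Scale the pivot row so that the pivot entry becomes 1.
        pivot_value = rows[col][col]
        rows[col] = [v / pivot_value for v in rows[col]]
        # Eliminate this unknown from every other equation.
        for r in range(n):
            if r != col and rows[r][col] != 0:
                factor = rows[r][col]
                rows[r] = [a - factor * b for a, b in zip(rows[r], rows[col])]
    # The last column now holds the value of each unknown.
    return [row[n] for row in rows]


# Two equations in two unknowns: 2x + y = 5 and x - y = 1.
x, y = solve_linear_system([[2, 1], [1, -1]], [5, 1])
```

For this small system the result is x = 2 and y = 1. A system of 29 equations follows the same steps, but the amount of arithmetic grows quickly, which is why doing it by hand was so onerous.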

Similarly, another special-purpose electronic computer named Colossus was built in England starting in 1943 for the purpose of breaking German codes. It was designed by engineer Tommy Flowers for a codebreaking section headed by Max Newman, and it drew on earlier cryptanalytic work by Alan Turing and others. The existence of this computer was kept secret until the 1970s.

ENIAC: The First General-Purpose Electronic Computer

The first general-purpose digital electronic computer, one that could be programmed to perform a variety of computational tasks, was the ENIAC (Electronic Numerical Integrator And Computer). It was designed by John Mauchly and J. Presper Eckert and completed in the fall of 1945. ENIAC was originally built to calculate ballistic tables for the US military to aim their big guns.

ENIAC was a monster of a machine, filling a large room and weighing 30 tons. It included 18,000 vacuum tubes and used 200 kilowatts of electrical power (the lights dimmed in its Philadelphia neighborhood when it was first turned on). ENIAC was the first general-purpose computer because it could be programmed (given different sets of instructions to follow) by the cumbersome procedure of reconnecting cables and flipping switches.

Later computers were much more flexible because they incorporated the idea of stored programs, described in 1945 by mathematician John von Neumann (who worked on the Manhattan Project in Los Alamos). In this scheme, both the data being manipulated and the program of instructions for the computer are stored in memory. Modern computers use this same method.

Mauchly and Eckert later went on to work for the Univac division of the Remington Rand corporation. The Univac I (Universal Automatic Computer), which came out in 1951, was the first commercial computer produced in the United States.
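The stored-program idea can be illustrated with a toy machine written in Python. This is a minimal sketch, not a model of any real computer: the four opcodes (`LOAD`, `ADD`, `STORE`, `HALT`) and the memory layout are invented for this example. The point is that the instructions and the numbers they work on sit side by side in one list called `memory`.

```python
# Hypothetical opcodes for a toy machine (invented for this illustration).
HALT, LOAD, ADD, STORE = 0, 1, 2, 3


def run(memory):
    """Run a toy stored-program machine and return its final memory."""
    memory = list(memory)  # work on a copy so the caller's list is unchanged
    accumulator = 0
    pc = 0                 # program counter: address of the next instruction
    while True:
        # Fetch: each instruction is an opcode followed by one operand.
        opcode, operand = memory[pc], memory[pc + 1]
        pc += 2
        # Decode and execute.
        if opcode == LOAD:
            accumulator = memory[operand]
        elif opcode == ADD:
            accumulator += memory[operand]
        elif opcode == STORE:
            memory[operand] = accumulator
        elif opcode == HALT:
            return memory
        else:
            raise ValueError(f"unknown opcode {opcode}")


program = [
    LOAD, 8,    # addresses 0-1: accumulator = memory[8]
    ADD, 9,     # addresses 2-3: accumulator += memory[9]
    STORE, 10,  # addresses 4-5: memory[10] = accumulator
    HALT, 0,    # addresses 6-7: stop
    2, 3, 0,    # addresses 8-10: data (two inputs and a result slot)
]

result = run(program)[10]  # the sum of the values at addresses 8 and 9
```

Changing what the machine does means changing the contents of `memory`, not rewiring anything, which is the contrast with ENIAC's cables and switches.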

Most of these early mainframes were purchased (or rented) by government bureaus, the military, research labs (such as Los Alamos National Lab), large corporations, and universities.

IBM (International Business Machines) entered the computer market in 1953 with its 701 computer. By 1960, IBM was the dominant force in the market of large mainframe computers. Smaller players in the mainframe market included Burroughs, Control Data, General Electric, Honeywell, NCR, RCA, and Univac.

Transistors and Integrated Circuits

Vacuum tubes consume lots of electrical power and are prone to burning out, which caused problems for early computers that used thousands of them. By 1960, the transistor had replaced the vacuum tube as the electrical switching device in computers. The transistor (developed at Bell Labs by William Shockley and others in the late 1940s) is a solid-state semiconductor device typically made of silicon or germanium. It is much smaller, much more reliable, and consumes much less energy than a vacuum tube. A vacuum tube computer that previously filled a sizable portion of a room could be replaced by a transistorized computer system that filled a few cabinets. A good example of an early computer using transistors is the IBM System/360, which dominated the mainframe computer market in the mid to late 1960s.

The late 1950s and early 1960s also saw the development of the microchip, or integrated circuit (IC), invented independently by Jack Kilby and Robert Noyce. An integrated circuit incorporates many transistors and other electrical components, all formed into a miniature circuit on a single chip of silicon.

The invention of the integrated circuit allowed computers to become even smaller, with the whole central processing unit (CPU) of the computer fitting onto one circuit board. These minicomputers were cheaper and smaller than a mainframe (the computer was roughly the size of a drawer in a large filing cabinet). A minicomputer might cost $100,000 instead of the $1,000,000 a mainframe cost, allowing many more businesses and universities to afford their own computer systems.

The most successful minicomputers were the PDP and VAX series made by Digital Equipment Corporation (DEC). Minicomputers were multi-user systems, in much the same way as mainframe computers, but on a smaller scale.

Minicomputers are now a mainly obsolete class of computer, having been largely replaced by high-end microprocessor workstations.

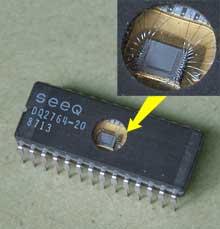

Microprocessors

As IC technology progressed, chip manufacturers could fit more and more circuitry onto the tiny silicon chips. In 1971, a company named Intel introduced the first microprocessor (also called an MPU), which fit a whole CPU onto one microchip. The Intel 4004 processor contained 2,300 transistors on a chip of silicon 1/8" x 1/6" in size.

In 1974, Intel introduced its 8080 chip, a general-purpose microprocessor offering ten times the performance of the earlier MPU. It was not too long before electronics hobbyists began building small computer systems based on the rapidly improving microprocessor chips.

The First Microcomputers

The first commercially available microcomputer of note was the Altair 8800 computer sold by MITS (Micro Instrumentation & Telemetry Systems), a company founded by Dr. Ed Roberts that was based in Albuquerque, New Mexico. The computer was featured on the cover of the January 1975 issue of Popular Electronics, and was sold as a kit for $397 or assembled for $439. It used a 2 MHz Intel 8080 processor and had 256 bytes of RAM.

Remember that a computer can’t do anything without software, and some companies sprang into existence to fill this need. One small company of note was formed in Albuquerque by a Harvard dropout to provide software (a BASIC language) for the Altair computer. The founder’s name was Bill Gates, and the company he formed (along with his partner Paul Allen) was Microsoft.

Dozens of companies (most of which have long since vanished) began offering microcomputers for sale, most of them based on the Intel 8080 processor and running the CP/M operating system. Other companies, such as Radio Shack, Atari, and Commodore, had proprietary operating systems. Of particular note is a company named Apple, founded by Steve Jobs and Steve Wozniak on April 1, 1976. Their Apple II computer was a hit, especially in the home and education markets.

Two things caused the microcomputer market to really take off in the late 1970s and early 1980s: spreadsheet software and the IBM PC. Spreadsheet software (the first was VisiCalc for the Apple II, written by Dan Bricklin) finally convinced business people that there was a serious use for microcomputers. The IBM PC, released in 1981, gave legitimacy to the microcomputer by virtue of the IBM name (remember, IBM was the maker of big mainframe computers, and it was said that “Nobody ever gets fired for buying IBM”). It used a 4.77 MHz Intel 8088 processor.

IBM approached Microsoft about an operating system (OS) for its new PC. Bill Gates told them, “We have an OS that will run on this new machine you are planning,” and made a deal. Microsoft did not, in fact, have such an OS, but they quickly bought one from a third party and converted it into PC-DOS. But what Gates did that was really clever was to make a deal with IBM that allowed Microsoft to also sell the OS to other companies as MS-DOS, and Microsoft’s future was set.

IBM PC sales skyrocketed and IBM dominated the market within two years, releasing the PC XT (1983) and the PC AT (1984), the latter using the Intel 80286 processor.

But, almost as quickly, IBM lost its dominance in the PC marketplace when other companies (such as Compaq) began to release “PC compatible” computers (also called “PC clones”). By 1986 the clones owned most of the market, and IBM never regained its dominance. Microsoft, on the other hand, supplied its operating systems to all PCs, becoming a huge corporation.

Graphical User Interface

Computers were traditionally very difficult to use, requiring the user to memorize and type in the necessary commands (this is called a Command Line Interface). To make computers more accessible, the Graphical User Interface (GUI) was developed. In a GUI, the user interacts with a graphical display on the screen containing icons, windows, and controls. Commands are chosen from menus rather than typed in.

The GUI was developed at the Xerox Palo Alto Research Center, but the management at Xerox failed to see the usefulness of it. When Steve Jobs of Apple saw the GUI, however, he recognized its value. Apple licensed the concepts from Xerox, developed them further, and released the first successful GUI computer, the Macintosh, in 1984. Macintosh computers used the Motorola 68000 series of microprocessors (and later the PowerPC series of microprocessors).

Microsoft was also quick to realize the worth of a GUI, but its graphical user interface, Windows, was slow in displacing DOS on PCs (the first versions of Windows left much to be desired).

More details about computer hardware and software can be found in other parts of this tutorial.